The report: A Generative AI Roadmap for Financial Institutions

Published: October 2023

Source: Boston Consulting Group

Why we picked it: Many financial institutions are moving rapidly to embrace the promise of AI, expanding beyond piecemeal pilot programs to applications throughout their organizations. This new report promises banks an actionable framework for success in the next stage of the AI revolution.

Executive Summary

Artificial intelligence offers immense potential to forecast consumer needs and tailor future product offerings based on customer behaviors. As BCG notes, financial institutions now have access to AI toolkits that can transform the entire customer experience and journey.

“In the rapidly changing AI landscape, establishing a firm people strategy is as critical as adeptly navigating the challenges of governance and regulation. And because the technology progresses daily, a forward-looking AI vision is imperative for financial leaders shaping the future.”

However, to make the most of the latest technology, banks will need to be clear on their goals and use multiple types of AI in collaboration with human talent. In this report, BCG lays out its roadmap for financial institutions to adopt AI while addressing regulatory compliance and aligning AI development with the bank’s values.

Key Takeaways

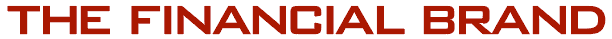

• Predictive AI supports logic, measurement, and calculation whereas generative AI (or GenAI) supports creativity, expression, and holistic perspectives.

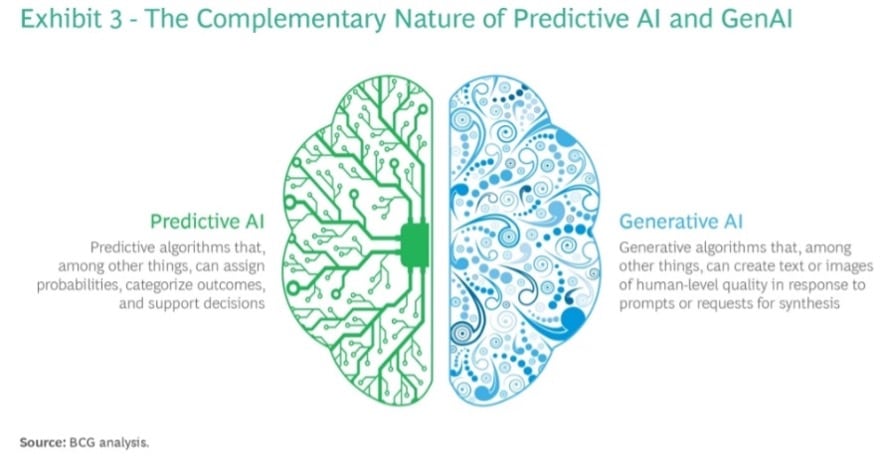

• BCG advocates a “golden pattern process” that uses predictive AI and GenAI together to process information, evaluate and decide, and take creative action.

• To make the most of AI, banks must pilot with experimental discipline.

What we liked: The report explains the differences between GenAI and predictive AI well and lays out use cases for how the two can work together. BCG’s proposed “process/evaluate/take creative action” pattern helps illustrate opportunities and use cases.

What we didn’t: BCG tries to tackle a lot of heavy subject matter, leaving the reader wanting more. For example, its framework for responsible AI adoption is interesting, but vague. While BCG has written several broad pieces about responsible AI, a deeper dive is needed to better understand how this framework might be applied in a highly sensitive field like financial services.

Things that made us go “Hmm”: BCG warns ominously, “Regulators around the globe have been busy … updating data privacy, liability, and copyright laws for the new technology… [financial institutions] should expect to receive special scrutiny in all three of these regimes, as their products are considered essential to citizens and particularly sensitive.”

Guiding Principles

In recent years, AI has reshaped functions and processes across a slew of industries, from retail and supply chain to healthcare and technology. The changes have been especially profound in the banking industry. Because banks hold gold mines of customer data, tech companies and startups have been eager to develop and deploy new solutions for them. Financial institutions adopting AI, however, need to consider some guiding principles.

Predictive AI vs. GenAI: There is frequent confusion about AI, BCG emphasizes, with heavy overlap between the definitions of AI, machine learning, deep learning, and generative AI.

Over the past decade, many financial institutions have adopted a form of “predictive AI” for things like risk monitoring, optimal pricing, and product modeling. However, GenAI and predictive AI serve fundamentally different purposes and, in the future, banks will have to learn to use both simultaneously.

Together, predictive AI and GenAI work like the human brain: Predictive AI is the left side of the brain and is used for logic, measurement and calculation whereas GenAI serves as the right side, designed for creativity, expression, and even ideation.

An example: While a machine learning credit risk scoring system may make better lending decisions than most humans, highly complex transactions may be better handled by humans. BCG points out that studies show that GenAI performs best when humans are complementary to its output.

However, when humans act as enhancers, trying to improve the output, they can significantly diminish the value of AI. And a “survival of the fittest” strategy — launching an extensive array of small AI use cases to see which flourish — often yields disappointing results.

To get the mix right, financial institutions need to embrace experimental discipline. Launching small-scale use cases within innovation-driven business areas can be incredibly beneficial. The most effective AI strategy is to perform selected experiments in controlled, laboratory-type testing environments, with near-scientific discipline: Observe, question, hypothesize, predict, test and iterate.

An AI Framework and End-to-End Solutions

According to BCG, financial institutions can capture more value by holistically reimagining entire processes end-to-end, rather than trying to insert AI into legacy processes built around humans. An end-to-end approach redesigns processes from the ground up, optimizing both AI and human roles in a collaborative design.

This could involve (for example) using predictive AI to determine the best offer to reach a customer, while leveraging GenAI to create content and design visuals to deliver the offer.

Golden patterns: BCG lays out some “golden pattern process” opportunities for GenAI and predictive AI to work together.

The sequence is to:

(1) Process information

(2) Evaluate and decide

(3) Take creative action.

In this pattern, AI could respond to a customer inquiry, process an invoice, decide on a credit application or monitor an account for money laundering.
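As a rough illustration of the three-step sequence, here is a minimal Python sketch built around the credit application example. Everything in it is an assumption made for illustration and does not come from the BCG report: the `CreditApplication` fields, the function names, and the 0.5 loan-to-income threshold are hypothetical. A fixed rule stands in for a predictive model, and a text template stands in for a GenAI call.

```python
from dataclasses import dataclass


@dataclass
class CreditApplication:
    """Hypothetical input record; field names are illustrative only."""
    applicant: str
    income: float      # annual income, assumed positive
    requested: float   # requested loan amount


def process_information(app: CreditApplication) -> dict:
    """Step 1: turn the raw application into structured features."""
    if app.income <= 0:
        raise ValueError("income must be positive")
    return {
        "applicant": app.applicant,
        "loan_to_income": app.requested / app.income,
    }


def evaluate_and_decide(features: dict, max_ratio: float = 0.5) -> bool:
    """Step 2: stand-in for predictive AI (a simple threshold rule)."""
    return features["loan_to_income"] <= max_ratio


def take_creative_action(features: dict, approved: bool) -> str:
    """Step 3: stand-in for GenAI (a template, not a real model call)."""
    outcome = "approved" if approved else "declined"
    return f"Dear {features['applicant']}, your application was {outcome}."


def golden_pattern(app: CreditApplication) -> str:
    """Chain the three steps in the order the pattern describes."""
    features = process_information(app)
    approved = evaluate_and_decide(features)
    return take_creative_action(features, approved)


if __name__ == "__main__":
    print(golden_pattern(CreditApplication("Ada", 100_000.0, 30_000.0)))
```

Keeping each step as a separate function reflects the idea that the decision step and the content step can be served by different kinds of AI, and that a human reviewer could be placed between any two of them.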

Rethinking people and process: Many banks have become so enamored with technology and advancement that they overlook the importance of strategy, people, and process. To adjust to these changes, banks and credit unions must be bold in rethinking people-driven processes and functions with interdisciplinary teams and a more agile structure.

New talent and skills: In the coming years, nearly every human role in financial services will have a relationship with AI. GenAI will have a powerful impact on functions like marketing, customer service, legal, and software development, where automation will support higher-quality service and cost reduction.

Financial institutions accordingly will need an enterprise-level GenAI strategy with a value-added talent plan defining the required skills.

Prioritizing governance: BCG also argues that banks and credit unions need solid governance for AI and GenAI initiatives to mitigate potential regulatory, legal, and reputational risks. For example, there have already been cases of bias against certain customers noted in large language model (LLM) data sets.

In fact, one BCG survey of global executives found nearly 70% said the limited traceability of LLM sources discouraged them from using GenAI. As regulators are still working on AI laws, regulatory uncertainty around AI will prevail for quite some time.

Creating a framework for responsible AI: In the meantime, banks and credit unions should create their own framework that focuses on existing regulatory compliance and aligns AI development with the bank’s purpose and values. BCG’s “responsible AI” framework has five key components:

• A comprehensive strategy linked to the company’s values, ethical principles, and risk management.

• Governance and oversight by an AI leadership team.

• Rigorous processes to monitor and review products for responsible AI criteria.

• Technology to mitigate AI risks and support responsible AI design.

• A responsible AI culture of understanding among all staff, outlining principles, roles, and responsibilities.