Why 95% of Enterprises Are Getting Zero Return on AI Investment

By David Evans, Chief Content Officer at The Financial Brand

Executive Summary

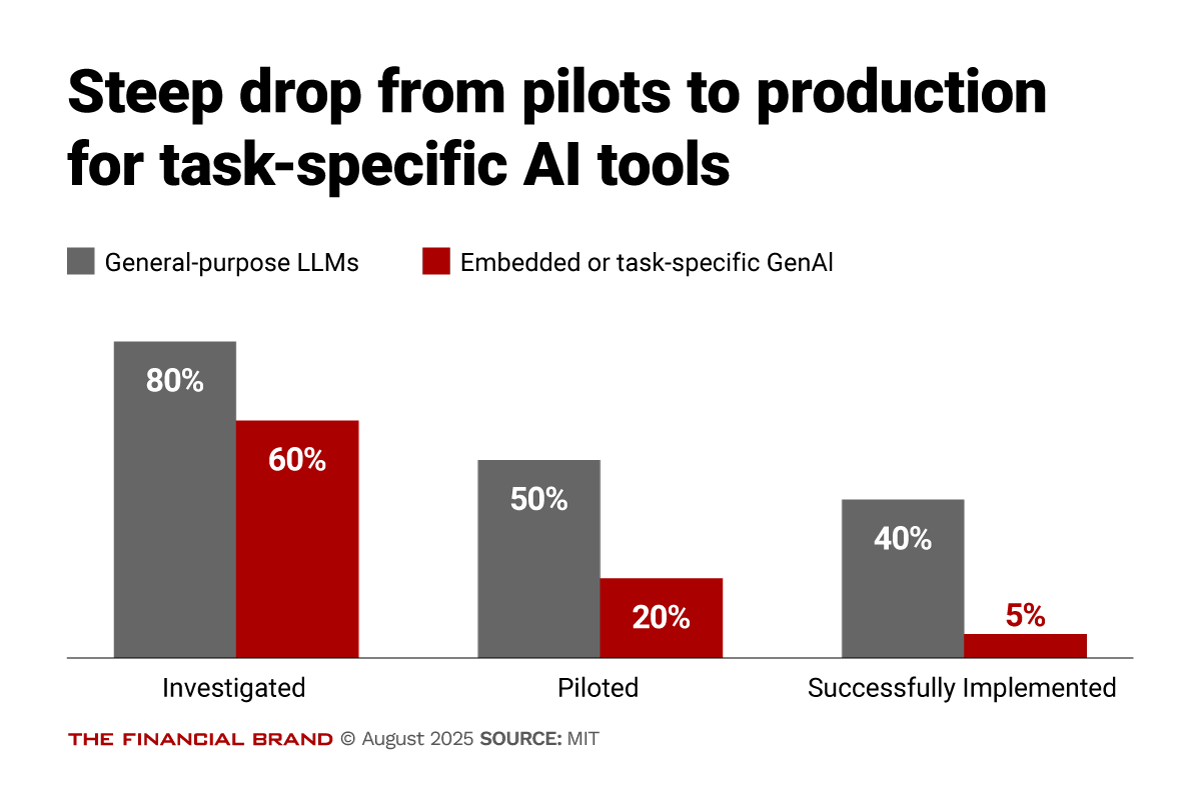

- The GenAI implementation failure rate is staggering, according to a new report from MIT. While 80% of organizations have explored GenAI tools and 40% report deployment, only 5% of custom enterprise AI solutions reach production, creating a massive gap between pilot enthusiasm and actual transformation.

- Investment allocation misses high-ROI opportunities. 50% of GenAI budgets flow to sales and marketing despite back-office automation delivering faster payback periods, with successful implementations generating $2-10M annually in BPO cost reductions.

- Strategic partnerships dramatically outperform internal builds. External partnerships achieve 66% deployment success compared to just 33% for internally developed tools, yet most organizations continue pursuing expensive internal development efforts.

Despite $30 to 40 billion in enterprise GenAI investment, a stunning 95% of organizations are achieving zero measurable return, according to a new report from MIT. The report argues that never before has a technology category attracted such massive investment while delivering such disappointing returns.

This stark separation between a few AI winners and everyone else, which the report calls the “GenAI Divide,” isn’t driven by model quality or regulation; it’s determined by approach. While generic tools like ChatGPT have been explored or piloted by more than 80% of organizations, enterprise-grade custom solutions face a brutal reality: only 5% successfully reach production deployment. The core barrier isn’t infrastructure or talent; it’s learning capability. Most GenAI systems lack memory, contextual adaptation, and continuous improvement, which are the exact capabilities that separate transformative AI from expensive productivity theater.

Organizations on the right side of the divide share common traits: they prioritize external partnerships over internal builds (achieving twice the success rate), focus on learning-capable systems that retain context, and measure success through business outcomes rather than software benchmarks.

The adoption-transformation gap

This divide manifests most clearly in the stark difference between AI adoption rates and actual business transformation. Consumer-grade tools like ChatGPT have achieved remarkable penetration, with over 80% of organizations reporting exploration or pilot programs. Nearly 40% claim successful deployment. Yet beneath these impressive adoption statistics lies a more troubling reality: most implementations deliver no measurable profit and loss impact.

The contrast becomes even sharper when examining enterprise-specific AI solutions. While 60% of organizations have evaluated custom or vendor-sold GenAI systems, only 20% progress to the pilot stage, and a mere 5% achieve production deployment with sustained business value. In other words, roughly three out of four pilots never make it into production.

The two-speed reality of industry disruption

MIT’s comprehensive analysis across nine major industry sectors reveals that genuine structural disruption remains concentrated in just two: Technology and Media. The remaining seven sectors, including financial services, show minimal structural change despite widespread pilot activity.

The strategic partnership advantage

Perhaps the most intriguing finding involves organizational approach. Despite conventional wisdom favoring internal AI development, external partnerships achieve dramatically superior results. Organizations pursuing strategic partnerships with AI vendors reach deployment 66% of the time, compared to just 33% for internal development efforts.

This gap reflects more than simple execution differences. External partners bring specialized expertise, faster time-to-market, and pre-built learning capabilities that internal teams struggle to replicate. More importantly, they offer systems designed from the ground up to adapt and improve—the exact characteristics missing from most enterprise AI initiatives.

The Learning Gap That Defines Success

The fundamental difference between organizations crossing the GenAI Divide and those remaining trapped lies not in technology sophistication or financial resources, but in their approach to learning-capable systems.

Why generic tools succeed and fail

The paradox of GenAI adoption becomes clear when examining user preferences. The same professionals who praise ChatGPT for flexibility and immediate utility express deep skepticism about custom enterprise tools. When asked to compare experiences, they cite three consistent themes: generic LLM interfaces produce better answers, users are already familiar with the interface, and trust levels remain higher for consumer tools.

This preference reveals the fundamental learning gap. A corporate lawyer investing $50,000 in specialized contract analysis tools often defaults to ChatGPT for drafting work, explaining: “Our purchased AI tool provides rigid summaries with limited customization options. With ChatGPT, I can guide the conversation and iterate until I get exactly what I need.”

Yet this same preference exposes why most organizations remain stuck. For mission-critical work requiring persistence, contextual awareness, and continuous improvement, current tools fall short. The same lawyer who favors ChatGPT for initial drafts draws clear boundaries: “It’s excellent for brainstorming and first drafts, but it doesn’t retain knowledge of client preferences or learn from previous edits. For high-stakes work, I need a system that accumulates knowledge and improves over time.”

The Memory and Adaptability Crisis

Research reveals a stark preference hierarchy based on task complexity and learning requirements. For simple tasks such as email drafting, basic analysis, and quick summaries, 70% of users prefer AI assistance. But for anything requiring sustained context, relationship memory, or iterative improvement, users prefer humans by 9-to-1 margins.

The dividing line isn’t intelligence or capability; it’s memory, adaptability, and learning capacity. Current GenAI systems require extensive context input for each session, repeat identical mistakes, and cannot customize themselves to specific workflows or preferences. These limitations help explain why 95% of enterprise AI initiatives fail to achieve sustainable value.

Behind disappointing enterprise deployment numbers lies a thriving “shadow AI economy” in which employees use personal tools to automate significant portions of their work. While only 40% of companies provide official LLM subscriptions, workers from over 90% of surveyed organizations report regular use of personal AI tools for work tasks.

This shadow usage demonstrates that individuals can successfully cross the GenAI Divide when given access to flexible, responsive tools. The pattern suggests that successful enterprise adoption must build on rather than replace this organic usage, providing the memory and integration capabilities that consumer tools lack while maintaining their flexibility and responsiveness.

The Winning Playbook for Crossing the Divide

According to the MIT study, organizations successfully crossing the GenAI Divide share distinctive approaches that separate them from the struggling majority. These patterns offer actionable insights for executives seeking to move their organizations from the wrong to the right side of the divide.

The build vs. buy decision point

The data overwhelmingly supports strategic partnerships over internal development. Organizations pursuing external partnerships achieve deployment success rates of 66% compared to 33% for internal builds. This advantage extends beyond simple success metrics to include faster time-to-value, lower total cost, and better alignment with operational workflows.

Successful partnerships typically begin with narrow, high-value workflows before expanding into core processes. Voice AI for call summarization, document automation for contracts, and code generation for repetitive engineering tasks represent common starting points. These applications succeed because they require minimal configuration while delivering immediate, visible value.

Failed implementations often involve complex internal logic, opaque decision support, or optimization based on proprietary heuristics. These tools frequently encounter adoption friction due to deep enterprise specificity and integration requirements that exceed vendor capabilities.

The learning systems imperative

Executives consistently emphasize specific priorities when evaluating AI vendors: systems must learn from feedback (66% demand this capability), retain context across sessions (63% require this), and customize deeply to specific workflows. Organizations crossing the divide partner with vendors who deliver these learning capabilities rather than settling for static systems requiring constant prompting.

The most successful implementations feature persistent memory, iterative learning, and autonomous workflow orchestration. Early enterprise experiments show how memory and autonomy can address core enterprise gaps: customer service agents that handle complete inquiries end to end, financial processing agents that monitor and approve routine transactions, and sales pipeline agents that track engagement across channels.

The organizational design factor

Successful organizations decentralize implementation authority while maintaining clear accountability. Rather than relying on centralized AI functions to identify use cases, they allow budget holders and domain managers to surface problems, evaluate tools, and lead rollouts. This bottom-up sourcing, combined with executive oversight, accelerates adoption while preserving operational fit.

Individual contributors and team managers often drive the strongest enterprise deployments. Many begin with employees who have already experimented with personal AI tools, creating “prosumer” champions who intuitively understand GenAI capabilities and limitations. These power users become early advocates for internally sanctioned solutions.

The Real ROI Hidden in Plain Sight

Despite 50% of GenAI budgets flowing to sales and marketing functions, the most dramatic cost savings emerge from back-office automation. While front-office gains capture attention and board visibility, back-office deployments often deliver faster payback periods and clearer cost reductions.

Front-office vs. back-office returns

Best-in-class organizations generate measurable value across both areas, but the distribution surprises many executives. Front-office wins include 40% faster lead qualification and a 10% improvement in customer retention through AI-powered follow-ups. These gains generate board-friendly metrics and visible customer impact.

Back-office wins prove more substantial: $2-10 million annually in eliminated BPO spending, 30% reduction in external creative and content costs, and $1 million saved annually on outsourced risk management. These savings emerge not from workforce reduction but from replacing expensive external services with AI-powered internal capabilities.

The workforce impact reality

Contrary to widespread concerns about mass layoffs, GenAI’s workforce impact is concentrated in functions historically treated as non-core: customer support operations, administrative processing, and standardized development tasks. These roles were already vulnerable before AI arrived because they were often outsourced and highly standardized.

The Investment Misallocation Problem

Investment allocation reveals why many organizations remain on the wrong side of the divide. Sales and marketing functions capture 70% of AI budget allocation because their results are easier to measure, not because they offer superior returns. Back-office functions such as legal, procurement, and finance offer subtler but often more dramatic efficiencies.

This bias perpetuates the divide by directing resources toward visible but often less transformative use cases while underfunding the highest-ROI opportunities. Trust and social proof compound this problem, with executives heavily relying on peer recommendations and referrals rather than objective capability assessment.

The GenAI Divide represents more than a temporary market inefficiency. It signals a fundamental shift in how organizations must approach AI adoption. Success requires abandoning traditional software procurement approaches in favor of partnership models that prioritize learning capability over feature completeness.

Organizations currently trapped on the wrong side of the divide face a clear path forward: stop investing in static tools requiring constant prompting, start partnering with vendors offering learning-capable systems, and focus on workflow integration over demonstration impressiveness. The divide is not permanent, but crossing it demands fundamentally different choices about technology, partnerships, and organizational design.